Visual Paradigm Desktop |

Visual Paradigm Desktop |  Visual Paradigm Online

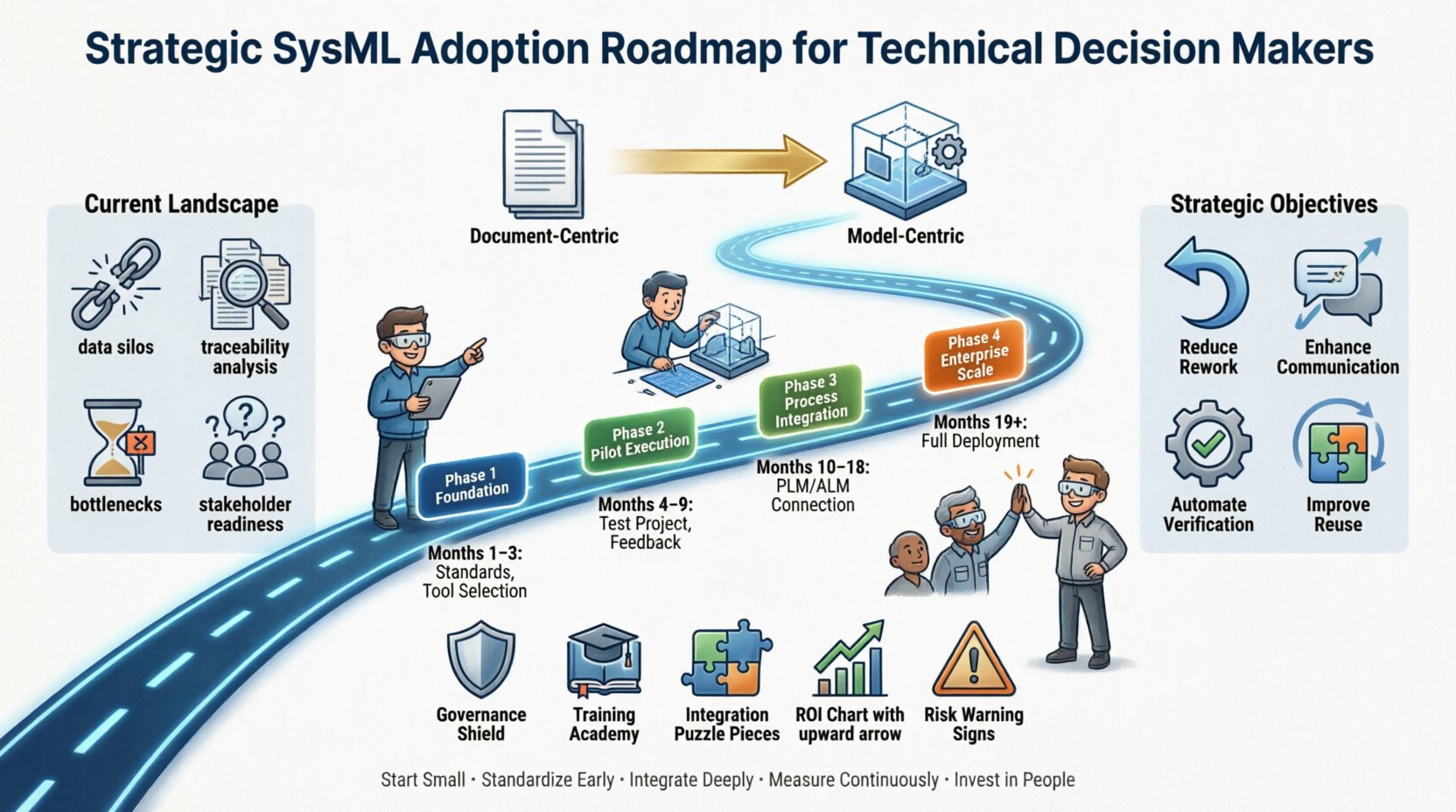

Implementing the Systems Modeling Language (SysML) represents a significant shift in how engineering organizations manage complexity: it moves the discipline from document-centric workflows to model-centric practices. For technical leaders, this transition is not merely a software upgrade; it is a fundamental restructuring of information flow, decision-making processes, and verification strategies. This guide provides a structured approach to integrating SysML into enterprise architecture without relying on specific vendor promises.

Before defining an adoption strategy, assess the existing ecosystem thoroughly. Many organizations operate a hybrid environment in which requirements, design, and verification data live in siloed repositories. Spreadsheets, Word documents, and legacy CAD tools often hold critical data that is disconnected from the system architecture. This fragmentation creates traceability gaps and increases the risk that design errors propagate to later stages.

This diagnostic phase ensures that the adoption strategy addresses actual pain points rather than theoretical improvements. It sets the baseline against which future efficiency gains can be measured.
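To make the baseline concrete, the diagnostic pass can measure how many requirements trace to at least one design or verification element. The sketch below is a minimal Python illustration, assuming requirements have already been exported from the existing repositories into simple records; the `Requirement` class, its fields, and the element IDs are hypothetical and are not part of SysML or any tool's API.

```python
from dataclasses import dataclass, field


@dataclass
class Requirement:
    """Hypothetical export record for one requirement."""
    req_id: str
    # IDs of design or verification elements this requirement traces to.
    trace_links: list[str] = field(default_factory=list)


def traceability_coverage(requirements: list[Requirement]) -> tuple[float, list[str]]:
    """Return the fraction of requirements with at least one trace link,
    plus the IDs of requirements with no links (the traceability gaps)."""
    if not requirements:
        return 0.0, []
    gaps = [r.req_id for r in requirements if not r.trace_links]
    covered = len(requirements) - len(gaps)
    return covered / len(requirements), gaps


if __name__ == "__main__":
    # Illustrative records only.
    baseline = [
        Requirement("REQ-001", ["BLK-Engine"]),
        Requirement("REQ-002"),
        Requirement("REQ-003", ["TC-017"]),
        Requirement("REQ-004"),
    ]
    coverage, gaps = traceability_coverage(baseline)
    print(f"Coverage: {coverage:.0%}, gaps: {gaps}")
```

Recording this number before the pilot starts gives a fixed reference point for the "Traceability coverage achieved" metric later in the roadmap.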

Adoption efforts often fail because they lack specific, measurable goals. Vague aspirations like “improving engineering” are insufficient. Decision makers must define what success looks like in tangible terms. The objectives should align with broader business goals, such as reducing time-to-market, lowering the cost of quality, or enhancing system reliability.

Setting these targets allows for the creation of a governance framework that enforces standards while providing flexibility for different project needs.

A successful rollout rarely happens overnight. It requires a phased approach that minimizes disruption while delivering incremental value. The following table outlines a recommended timeline and focus areas for a typical enterprise environment.

| Phase | Duration | Key Activities | Success Metrics |
|---|---|---|---|
| 1. Foundation | Months 1-3 | Standards definition, tool selection, pilot project selection | Standards document approved; Pilot environment ready |
| 2. Pilot Execution | Months 4-9 | Execute pilot project, gather feedback, refine workflows | Model completeness; Traceability coverage achieved |
| 3. Process Integration | Months 10-18 | Integrate with PLM/ALM systems, expand training | Integration points functional; Training completion rates |
| 4. Enterprise Scale | Months 19+ | Full deployment, continuous improvement, governance audits | Organization-wide adoption; KPI improvement |

Phase 1 (Foundation) establishes the rules of engagement: the modeling standards that will govern the organization. Which diagrams are mandatory? How are requirements tagged? What naming conventions apply to blocks and interfaces? Without these rules, models become inconsistent and difficult to maintain.
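Naming conventions are easiest to enforce when they are machine-checkable. The following is a minimal sketch, assuming model element names can be exported as `(kind, name)` pairs; the three patterns in `NAMING_RULES` are hypothetical examples, and the real rules would come from the organization's own standards document.

```python
import re

# Hypothetical conventions; substitute the rules defined in Phase 1.
NAMING_RULES = {
    "block": re.compile(r"[A-Z][A-Za-z0-9]*"),         # PascalCase, e.g. FuelPump
    "interface": re.compile(r"IF_[A-Z][A-Za-z0-9]*"),  # IF_ prefix, e.g. IF_PowerBus
    "requirement": re.compile(r"REQ-\d{3,}"),          # REQ- plus 3 or more digits
}


def check_names(elements: list[tuple[str, str]]) -> list[str]:
    """Check (kind, name) pairs against NAMING_RULES.

    Returns one message per violation; an empty list means all names comply.
    """
    violations = []
    for kind, name in elements:
        rule = NAMING_RULES.get(kind)
        if rule is None:
            violations.append(f"{name}: unknown element kind '{kind}'")
        elif not rule.fullmatch(name):
            violations.append(f"{name}: does not match {kind} convention")
    return violations


if __name__ == "__main__":
    sample = [
        ("block", "FuelPump"),
        ("block", "fuel_pump"),
        ("interface", "IF_PowerBus"),
        ("requirement", "REQ-12"),
    ]
    for message in check_names(sample):
        print(message)
```

Running a check like this on every model commit or review keeps the standards from eroding silently as more teams contribute.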

In Phase 2, choose a pilot project that is important enough to be representative but is not the organization's most mission-critical program. The goal is to learn. Apply the standards defined in Phase 1 to this project and encourage the team to document the challenges they face. This feedback loop is crucial for refining the approach before a wider rollout.

Once the pilot proves its value, Phase 3 shifts the focus to integration. Models must not exist in isolation; they need to connect with Product Lifecycle Management (PLM) and Application Lifecycle Management (ALM) systems so that model data flows into manufacturing and maintenance records without manual re-entry.

The final phase involves rolling the methodology out across all major programs. This is where the culture shift solidifies. Regular audits ensure compliance with the established standards. Continuous improvement loops are established to update the standards based on new industry practices.

As the number of models grows, governance becomes the critical factor in preventing technical debt. A model that is never reviewed or updated becomes a liability. A governance framework ensures that the models remain accurate reflections of the physical system.

Effective governance prevents the model from becoming a “black box” where only one person understands the logic. It promotes transparency and shared ownership of the system architecture.
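Parts of this governance framework can be automated as periodic checks. The sketch below assumes each model element carries a last-review date and the set of people who have reviewed it (hypothetical fields, not standard SysML properties). It flags elements whose review is overdue and elements known to fewer than a minimum number of reviewers, a simple proxy for the “black box” risk. The 90-day and two-reviewer thresholds are illustrative defaults, not recommendations.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class ModelElement:
    """Hypothetical governance metadata for one model element."""
    element_id: str
    last_reviewed: date
    reviewers: frozenset[str]


def governance_findings(
    elements: list[ModelElement],
    today: date,
    max_age_days: int = 90,
    min_reviewers: int = 2,
) -> list[tuple[str, str]]:
    """Return (element_id, finding) pairs for overdue reviews and
    elements reviewed by fewer than min_reviewers people."""
    cutoff = today - timedelta(days=max_age_days)
    findings = []
    for e in elements:
        if e.last_reviewed < cutoff:
            findings.append((e.element_id, "review overdue"))
        if len(e.reviewers) < min_reviewers:
            findings.append((e.element_id, "single point of knowledge"))
    return findings


if __name__ == "__main__":
    elements = [
        ModelElement("BLK-Engine", date(2024, 1, 10), frozenset({"alice", "bob"})),
        ModelElement("IF_PowerBus", date(2024, 5, 2), frozenset({"carol"})),
    ]
    for element_id, finding in governance_findings(elements, today=date(2024, 6, 1)):
        print(f"{element_id}: {finding}")
```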

Technology is only as effective as the people using it. A common failure point in SysML adoption is underestimating the training required. Engineers accustomed to text-based requirements often struggle with the visual and logical rigor of modeling.

The goal is to move from “I have to use this tool” to “I use this tool to solve problems.” This shift happens only when the tool is shown to be genuinely helpful in reducing cognitive load and error rates.

Modern engineering environments are complex ecosystems. SysML models must interact with simulation tools, code generators, and test management systems. The architecture of this toolchain determines the efficiency of the workflow.

Investing in a robust integration architecture reduces manual data entry and the associated risk of transcription errors. It allows the model to drive the engineering process rather than just record it.
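One way to reduce transcription is for integration code to compare model exports against downstream records and produce a change plan, rather than relying on manual re-entry. This is a minimal sketch, assuming both the model export and the PLM records have already been reduced to dictionaries keyed by part ID; real PLM and ALM systems expose their own connectors and APIs, which are not shown here. Treating the model as the source of truth is itself a design assumption, and the part IDs and attributes are hypothetical.

```python
def plan_sync(
    model_parts: dict[str, dict],
    plm_parts: dict[str, dict],
) -> dict[str, list[str]]:
    """Compare part attributes exported from the model with PLM records.

    Both inputs map a part ID to its attribute dict. Returns sorted part IDs
    to create in PLM, to update in PLM, and present only in PLM (orphaned).
    """
    model_ids, plm_ids = set(model_parts), set(plm_parts)
    to_create = sorted(model_ids - plm_ids)
    # Parts present in both systems whose attributes have drifted apart.
    to_update = sorted(
        pid for pid in model_ids & plm_ids if model_parts[pid] != plm_parts[pid]
    )
    orphaned = sorted(plm_ids - model_ids)
    return {"create": to_create, "update": to_update, "orphaned": orphaned}


if __name__ == "__main__":
    model = {"P-100": {"mass_kg": 2.5}, "P-200": {"mass_kg": 1.0}, "P-300": {"mass_kg": 0.4}}
    plm = {"P-100": {"mass_kg": 2.5}, "P-200": {"mass_kg": 1.2}, "P-900": {"mass_kg": 3.0}}
    print(plan_sync(model, plm))
```

Orphaned records are reported rather than deleted, so that an engineer decides whether the model or the PLM record is the one that is wrong.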

To sustain funding and support for the SysML initiative, technical leaders must demonstrate return on investment. This requires defining key performance indicators (KPIs) that reflect the value of the modeling effort.

Regular reporting on these metrics keeps the initiative visible and allows for course corrections if the expected benefits are not materializing.
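Reporting is simpler when each KPI is compared against the baseline captured during the diagnostic phase and its direction of improvement is explicit, since some metrics should rise (traceability coverage) while others should fall (defects found late in the lifecycle). A minimal sketch follows; the `Kpi` class and the sample figures are hypothetical illustrations, not measured results.

```python
from dataclasses import dataclass


@dataclass
class Kpi:
    """One KPI with its pre-adoption baseline and current value."""
    name: str
    baseline: float
    current: float
    higher_is_better: bool

    def percent_change(self) -> float:
        """Relative change versus baseline, in percent."""
        if self.baseline == 0:
            raise ValueError(f"{self.name}: baseline is zero, percent change undefined")
        return (self.current - self.baseline) * 100 / self.baseline

    def improved(self) -> bool:
        """True if the KPI moved in its desired direction."""
        if self.higher_is_better:
            return self.current > self.baseline
        return self.current < self.baseline


if __name__ == "__main__":
    # Illustrative values only, not measured results.
    kpis = [
        Kpi("Traceability coverage (%)", baseline=50.0, current=80.0, higher_is_better=True),
        Kpi("Defects found in integration test", baseline=40.0, current=30.0, higher_is_better=False),
    ]
    for k in kpis:
        status = "improved" if k.improved() else "not improved"
        print(f"{k.name}: {k.percent_change():+.1f}% vs baseline ({status})")
```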

Even with a solid plan, risks remain. Awareness of these risks enables proactive mitigation.

The engineering landscape is evolving rapidly with the introduction of artificial intelligence, digital twins, and cloud-native architectures. The SysML adoption strategy should be flexible enough to accommodate these future developments.

By keeping an eye on the horizon, decision makers can ensure that the investment in SysML remains relevant and valuable for years to come. The roadmap is not static; it must evolve alongside the technology and the business needs it supports.

Adopting SysML is a journey of continuous improvement. It requires commitment from leadership, investment in training, and a disciplined approach to governance. By following a structured roadmap, organizations can mitigate risks and maximize the benefits of model-based systems engineering.

This approach ensures that the organization builds a sustainable capability rather than simply purchasing a license. The ultimate goal is a more resilient, efficient, and innovative engineering environment where complexity is managed effectively through rigorous modeling practices.