Systems engineering relies heavily on the precision of its models. When working with Systems Modeling Language (SysML), the integrity of architecture deliverables determines the success of downstream implementation. A structured approach to reviewing these models is not optional; it is a necessity for maintaining consistency and traceability across the lifecycle. This guide outlines the essential protocols for conducting effective SysML model reviews.

Model reviews serve as the quality gate between design and execution. Unlike software code reviews, which focus on syntax and logic, SysML reviews focus on semantics, structural integrity, and requirement alignment. The goal is to ensure the model accurately represents the system intent before resources are committed to physical realization.

Core Objectives:

Without a standardized protocol, reviews become subjective and inconsistent. Teams often rely on individual expertise rather than established criteria. Adopting a formal protocol reduces risk and improves communication among stakeholders.

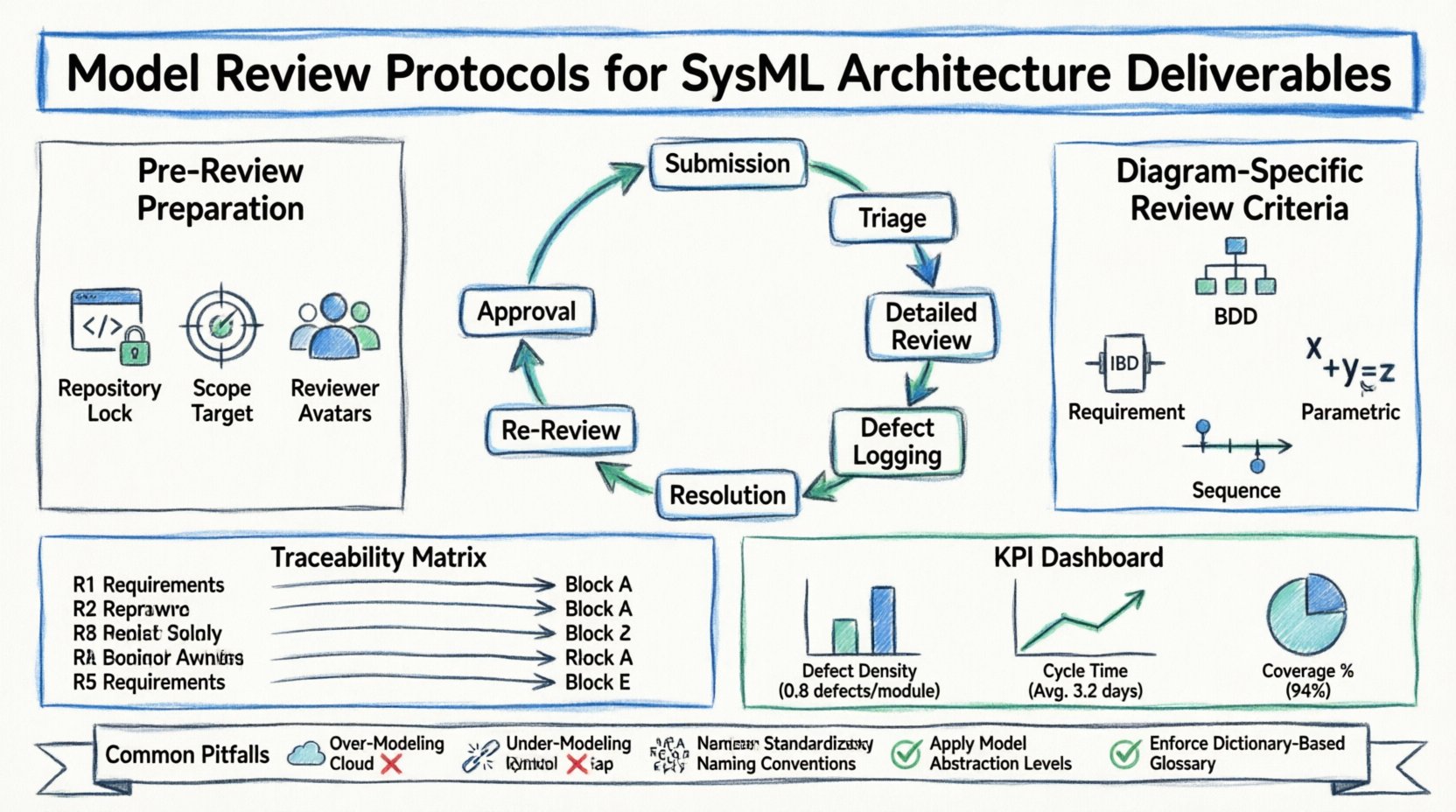

Before initiating a formal review session, specific preparatory steps must be completed. This phase ensures that the model is ready for scrutiny and that reviewers are aligned on the scope.

All participants must have access to the current version of the model repository. Outdated local copies lead to confusion regarding which version is under review. Ensure the model is checked out or locked to prevent concurrent editing conflicts during the review period.

Define exactly which parts of the architecture are in scope. A full-system review might be too broad for a single session. Break down the deliverables into manageable sections:

Select reviewers based on their expertise. A single person rarely possesses the knowledge to review every aspect of a complex system. Assign roles such as:

Different SysML diagrams serve different purposes. Each requires a specific set of checks to ensure the model is valid. The following table outlines the key focus areas for standard diagram types.

| Diagram Type | Primary Focus | Key Validation Points |
|---|---|---|
| Block Definition Diagram (BDD) | Structure and Hierarchy | Correct inheritance, defined properties, clear boundaries, no orphan blocks. |
| Internal Block Diagram (IBD) | Connectivity and Flow | Port types match block types, reference properties defined, flow connectors valid. |
| Requirement Diagram | Traceability | Unique IDs, satisfied by blocks, allocated to functions, no circular dependencies. |
| Parametric Diagram | Constraints and Math | Constraint blocks defined, variables typed, equations consistent, no circular constraints. |
| Sequence Diagram | Behavior and Timing | Correct lifelines, message ordering, clear state transitions, interaction protocols. |
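One of the BDD checks in the table, "no orphan blocks", lends itself to a mechanical pre-check before the review session. The following is a minimal sketch, assuming the model has been exported to plain lists of block names and (source, target) relationship pairs. The function name `find_orphan_blocks` and the sample data are hypothetical and do not correspond to any particular tool's API.

```python
from typing import Iterable


def find_orphan_blocks(blocks: Iterable[str],
                       relationships: Iterable[tuple[str, str]]) -> list[str]:
    """Return blocks that take part in no relationship, in sorted order."""
    connected = {name for pair in relationships for name in pair}
    return sorted(set(blocks) - connected)


# Hypothetical export of a small BDD (assumed format, not a tool API).
blocks = ["Vehicle", "Engine", "Battery", "LegacySensor"]
relationships = [("Vehicle", "Engine"), ("Vehicle", "Battery")]

orphans = find_orphan_blocks(blocks, relationships)
print(orphans)  # ['LegacySensor']
```

An orphan reported here is only a candidate finding: a reviewer still has to decide whether the block is obsolete or simply not yet connected.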

The BDD forms the backbone of the structural model. Reviewers must verify the following:

The IBD details how components interact. This is where integration errors often hide.
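A simple way to surface such integration errors is to compare the types at both ends of every connector. The sketch below assumes connectors are available as pairs of port records; the `Port` class, its fields, and the sample connectors are illustrative assumptions. It uses strict type equality, which is a simplification: a real model may allow compatible or conjugated types on the two ends.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Port:
    owner: str      # part that owns the port
    name: str       # port name on that part
    type_name: str  # interface or flow type carried by the port


def mismatched_connectors(connectors):
    """Return connectors whose two ends carry different types."""
    return [(a, b) for a, b in connectors if a.type_name != b.type_name]


# Hypothetical IBD content for illustration only.
connectors = [
    (Port("Battery", "out", "DCPower"), Port("Motor", "in", "DCPower")),
    (Port("Controller", "cmd", "CANFrame"), Port("Motor", "ctl", "PWMSignal")),
]

for a, b in mismatched_connectors(connectors):
    print(f"{a.owner}.{a.name} ({a.type_name}) -> "
          f"{b.owner}.{b.name} ({b.type_name})")
```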

Traceability is among the most critical aspects of systems engineering.

These diagrams define the mathematical constraints of the system.
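Circular constraints, where a value is ultimately defined in terms of itself, can be found with a depth-first search over the dependencies between constraint variables. This is a sketch under the assumption that the dependencies have been extracted into a dictionary mapping each variable to the variables it is computed from; the function name and the sample data are hypothetical.

```python
def find_cycle(depends_on):
    """Return one dependency cycle as a list of names, or None if acyclic."""
    WHITE, GREY, BLACK = 0, 1, 2   # unvisited, on current path, finished
    colour = {}
    path = []

    def visit(node):
        colour[node] = GREY
        path.append(node)
        for nxt in depends_on.get(node, ()):
            state = colour.get(nxt, WHITE)
            if state == GREY:
                # nxt is already on the current path: the loop is closed.
                return path[path.index(nxt):] + [nxt]
            if state == WHITE:
                found = visit(nxt)
                if found:
                    return found
        path.pop()
        colour[node] = BLACK
        return None

    for start in list(depends_on):
        if colour.get(start, WHITE) == WHITE:
            found = visit(start)
            if found:
                return found
    return None


# Hypothetical constraint dependencies: variable -> variables it is computed from.
constraints = {
    "power": ["voltage", "current"],
    "current": ["resistance", "voltage"],
    "voltage": ["power"],  # closes the loop power -> voltage -> power
}
print(find_cycle(constraints))  # ['power', 'voltage', 'power']
```

The recursive form is adequate for review-sized models; a very deep dependency chain would call for an iterative traversal instead.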

Traceability links connect requirements to design elements. This alignment ensures that every requirement is addressed in the architecture. A review must verify the health of these links.

Links should ideally be bidirectional. This means you can trace from a requirement to the design, and from the design back to the requirement. Unidirectional links often lead to gaps where design decisions are not justified by requirements.
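Whether links are bidirectional can be checked by comparing the two directions as sets of pairs. The sketch below assumes the trace data can be exported as two dictionaries, one keyed by requirement and one keyed by design element; both the export shape and the identifiers are assumptions made for illustration.

```python
def one_way_links(req_to_design, design_to_req):
    """Return (requirement, element) pairs recorded in only one direction."""
    forward = {(r, d) for r, ds in req_to_design.items() for d in ds}
    backward = {(r, d) for d, rs in design_to_req.items() for r in rs}
    # Symmetric difference: pairs present in exactly one of the two sets.
    return sorted(forward ^ backward)


# Hypothetical trace data.
req_to_design = {"REQ-001": ["PowerUnit"], "REQ-002": ["Controller"]}
design_to_req = {
    "PowerUnit": ["REQ-001"],
    "Controller": [],
    "Display": ["REQ-003"],
}

print(one_way_links(req_to_design, design_to_req))
# [('REQ-002', 'Controller'), ('REQ-003', 'Display')]
```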

Calculate the coverage percentage. This metric indicates what share of the requirements is satisfied by at least one element of the current model.
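As a minimal sketch, coverage can be computed as the number of requirements with at least one satisfying element divided by the total number of requirements, times 100. The function name, the data layout, and the sample IDs below are assumptions for illustration.

```python
def coverage_percentage(requirement_ids, satisfied_by):
    """Percentage of requirements with at least one satisfying element."""
    ids = list(requirement_ids)
    if not ids:
        return 0.0  # convention chosen here: an empty set has no coverage
    covered = sum(1 for rid in ids if satisfied_by.get(rid))
    return 100.0 * covered / len(ids)


# Hypothetical requirement set and satisfy links.
requirement_ids = ["REQ-001", "REQ-002", "REQ-003", "REQ-004"]
satisfied_by = {
    "REQ-001": ["PowerUnit"],
    "REQ-002": ["Controller", "Display"],
    "REQ-003": [],            # link container exists but is empty
    "REQ-004": ["Chassis"],
}

print(coverage_percentage(requirement_ids, satisfied_by))  # 75.0
```

A high percentage only shows that links exist; whether each linked element actually satisfies its requirement remains a question for the reviewers.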

Ensure that requirements are not duplicated. If the same requirement appears twice, it may lead to conflicting updates. Use a unique ID system to prevent this.
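Unique IDs prevent duplicate identifiers, but the same requirement text can still be entered twice under different IDs. The sketch below groups requirements by normalized text to flag such cases; it assumes requirements are available as (ID, text) pairs and catches only exact duplicates after case and whitespace normalization, not paraphrases.

```python
from collections import defaultdict


def duplicate_requirements(requirements):
    """Group requirement IDs whose normalized text is identical."""
    by_text = defaultdict(list)
    for rid, text in requirements:
        key = " ".join(text.lower().split())  # ignore case and extra spaces
        by_text[key].append(rid)
    return [ids for ids in by_text.values() if len(ids) > 1]


# Hypothetical requirement entries.
requirements = [
    ("REQ-010", "The pump shall start within 2 s."),
    ("REQ-011", "Pressure shall stay below 5 bar."),
    ("REQ-027", "The pump  shall start within 2 s."),
]

print(duplicate_requirements(requirements))  # [['REQ-010', 'REQ-027']]
```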

A clear governance structure is essential for managing the review process. Without defined roles, accountability is diluted.

| Role | Responsibility | Authority |
|---|---|---|
| Model Owner | Maintains model integrity and updates. | Can modify the model. |
| Reviewer | Identifies defects and suggests improvements. | Cannot modify the model directly. |
| Approver | Validates that review findings are addressed. | Can sign off on the deliverable. |
| Stakeholder | Provides domain feedback and validation. | Cannot modify the model. |

The workflow should follow a linear progression to avoid bottlenecks.

To improve the review process over time, teams must track metrics. Data-driven insights help identify recurring issues and training gaps.

Review data should be analyzed to find patterns. If a specific type of error appears frequently, such as incorrect port types, this indicates a need for additional training or a change in modeling standards.
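If findings are logged with a category, recurring error types can be found with a simple tally. The sketch below assumes each finding is a dictionary with a `category` key; the category names and the threshold of three occurrences are illustrative choices, not values prescribed by any standard.

```python
from collections import Counter


def recurring_defects(findings, threshold=3):
    """Return (category, count) pairs seen at least `threshold` times."""
    counts = Counter(f["category"] for f in findings)
    return [(cat, n) for cat, n in counts.most_common() if n >= threshold]


# Hypothetical findings collected over several review cycles.
findings = [
    {"id": 1, "category": "port-type-mismatch"},
    {"id": 2, "category": "naming"},
    {"id": 3, "category": "port-type-mismatch"},
    {"id": 4, "category": "missing-trace"},
    {"id": 5, "category": "port-type-mismatch"},
    {"id": 6, "category": "missing-trace"},
]

print(recurring_defects(findings))  # [('port-type-mismatch', 3)]
```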

Reviewers should provide feedback on the review process itself. Are the criteria clear? Is the toolset effective? Continuous improvement of the protocol ensures long-term efficiency.

Architecture models evolve. Changes are inevitable due to new requirements or technical constraints. The review protocol must adapt to manage these changes effectively.

Before approving a change, assess its impact. Does this change affect other parts of the model? A change in one block may require updates to multiple interfaces.
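Impact assessment can be framed as a reachability question: starting from the changed element, which elements use it directly or indirectly? The following breadth-first sketch assumes a dictionary that maps each element to the elements that depend on it; the element names are hypothetical.

```python
from collections import deque


def impacted_elements(dependents, changed):
    """Return every element that depends on `changed`, directly or not."""
    seen = set()
    queue = deque([changed])
    while queue:
        current = queue.popleft()
        for user in dependents.get(current, ()):
            if user not in seen and user != changed:
                seen.add(user)
                queue.append(user)
    return sorted(seen)


# Hypothetical "is used by" relationships: element -> elements that use it.
dependents = {
    "PowerInterface": ["Battery", "Motor"],
    "Motor": ["Drivetrain"],
    "Drivetrain": ["Vehicle"],
    "Display": ["Vehicle"],
}

print(impacted_elements(dependents, "PowerInterface"))
# ['Battery', 'Drivetrain', 'Motor', 'Vehicle']
```

The result is the set of elements a reviewer should at least inspect; not every reachable element will necessarily need an update.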

Maintain a clear history of model versions. Each review cycle should correspond to a specific version tag. This allows teams to revert to previous states if a change introduces critical errors.

Formalize the process for requesting changes. A change request should include:

Even with a strict protocol, teams encounter common challenges. Recognizing these early helps mitigate risks.

Creating excessive detail too early wastes time and complicates the model. Focus on high-level architecture first. Refine details only when necessary.

Conversely, providing too little detail leads to ambiguity. Ensure critical interfaces and constraints are explicitly defined.

Using synonyms for the same concept creates confusion. Establish a glossary and enforce it during the review.
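Glossary enforcement can be partly automated by scanning element names for discouraged terms. The sketch below assumes the glossary is kept as a mapping from discouraged term to preferred term and that element names use CamelCase or underscore separators; both the mapping and the names are made up for illustration.

```python
import re


def glossary_violations(element_names, synonyms):
    """Map each offending name to (discouraged, preferred) term pairs."""
    violations = {}
    for name in element_names:
        # Split CamelCase, acronyms, digits, and separators into lowercase words.
        words = [w.lower() for w in
                 re.findall(r"[A-Z]?[a-z]+|[A-Z]+(?![a-z])|\d+", name)]
        hits = [(w, synonyms[w]) for w in words if w in synonyms]
        if hits:
            violations[name] = hits
    return violations


# Hypothetical glossary: discouraged term -> preferred term.
synonyms = {"accumulator": "battery", "regulator": "controller"}
element_names = ["BatteryPack", "AccumulatorPack", "motor_controller"]

print(glossary_violations(element_names, synonyms))
# {'AccumulatorPack': [('accumulator', 'battery')]}
```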

Focus often falls on functional requirements. Ensure performance, reliability, and safety requirements are also modeled and traced.

Do not rely solely on automated tool checks. Automation cannot validate semantic meaning or engineering intent. Human review remains essential.

The outcome of a review is not just a corrected model. It is a record of decisions made. Documentation ensures that future teams understand the rationale behind the design.

Document the key findings, decisions, and action items from each review session. This serves as an audit trail.

Use SysML notes to document design rationale within the model itself. This keeps the context close to the relevant elements.

Package the final model with the following:

Model reviews do not exist in a vacuum. They are part of a larger development lifecycle.

Ensure the model is ready for simulation. Reviewers should check if the parametric diagram supports the intended simulation scenarios.

The model serves as the source of truth for implementation. Ensure the model exports cleanly to code or hardware description languages without manual translation.

Verify that the test cases derived from the model match the model content. A mismatch here indicates a breakdown in the verification strategy.
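One way to detect such a mismatch is to compare the requirement IDs present in the model with the IDs that the test cases claim to verify. The sketch below assumes test cases are available as a mapping from test case ID to verified requirement IDs; the labels "untested" and "stale" are names chosen for this example.

```python
def verification_gaps(model_requirements, test_cases):
    """Compare requirement IDs in the model with those the tests verify."""
    model_ids = set(model_requirements)
    verified = {rid for rids in test_cases.values() for rid in rids}
    return {
        "untested": sorted(model_ids - verified),  # in model, no test
        "stale": sorted(verified - model_ids),     # tested, not in model
    }


# Hypothetical model content and derived test cases.
model_requirements = ["REQ-001", "REQ-002", "REQ-003"]
test_cases = {
    "TC-01": ["REQ-001"],
    "TC-02": ["REQ-002", "REQ-009"],
}

print(verification_gaps(model_requirements, test_cases))
# {'untested': ['REQ-003'], 'stale': ['REQ-009']}
```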

Adhering to these protocols ensures that SysML architecture deliverables are robust and reliable. The process requires discipline, clear communication, and rigorous checking.

Key Takeaways:

By implementing these protocols, engineering teams can reduce risk, improve quality, and accelerate the path from concept to realization. The model becomes a trusted asset rather than a source of uncertainty.